I’m going to halve my publication output. You should consider slow science, too

Summary

Adrian Barnett, a professor of statistics and meta-researcher, announces a personal rule to cap his publications at seven papers a year — roughly half his recent output — as part of a deliberate move towards “slow science”. He will spend about twice as long on each paper to improve rigour, stakeholder consultation, background reading and interpretation for real-world public-health impact. Barnett argues the research system is overloaded: publication numbers have surged, peer review and reading capacity are stretched, and overall quality appears to be declining. He links these problems to perverse career incentives that reward quantity over quality and notes emerging shortcuts such as low-quality LLM-generated papers and paper mills.

Key Points

- Barnett will limit his publications to seven papers per year to prioritise quality over quantity.

- He intends to double the time spent per paper for better scholarship, stakeholder input and testing.

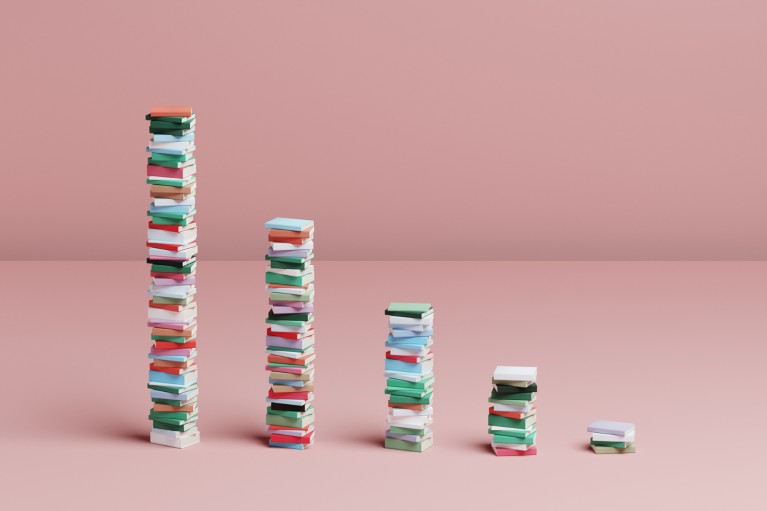

- Publication inflation is severe: PubMed-indexed articles rose from ~1.2 million (2014) to ~1.7 million (2024), an increase of roughly 40% in a decade, straining the system.

- Hyper-prolific authors now publish >60 papers a year, and neither peer-review nor readership capacity can keep up.

- Quality is suffering: more low-quality papers pass peer review and problematic practices (LLM misuse, paper mills) are emerging.

- Systemic incentives push early-career researchers to prioritise CV length over robust science.

- Slow science and prior proposals (for example, lifetime word limits) aim to shift the emphasis from speed to rigour.

Content summary

Barnett recounts his own publishing trajectory and the cultural pressure in academia to increase output. He sets out his personal threshold of seven papers a year as a tool to force deeper engagement with each project. The aim is not to work less but to work differently: more reading, more consultation, more rigorous testing and clearer translation of results for public health practice.

He presents evidence and arguments that the current publication boom is unsustainable and harmful. Growing article volumes make it impossible for researchers to read and review the literature thoroughly, and this overload is associated with a rise in low-quality publications. The pressure to publish is driven by hiring and funding systems that value numbers, which disadvantages early-career researchers and encourages shortcuts.

Context and relevance

This piece speaks directly to academics, research managers, funders and institutions. It connects to broader debates about research integrity, peer-review capacity, reproducibility and the ethics of incentives. With mounting calls for reform from publishers and meta-researchers, Barnett’s personal experiment models a behavioural change that could be scaled by institutions if evaluation criteria shifted away from raw output toward demonstrable quality and impact.

Why should I read this?

Because if you’re tired of the publish-or-perish treadmill, this is a short, candid case study of someone doing something about it. It’s practical, grounded in evidence and full of things to argue about at your next faculty meeting — plus it points to concrete problems (and fixes) that affect hiring, funding and the trustworthiness of science.

Author style

Punchy. Barnett’s piece is direct and action-oriented: he explains a personal rule, backs it with data, and uses his seniority to spotlight systemic problems. For readers in research leadership or policy roles, the article reads like a prompt to act rather than a mere opinion.