The AI bias playbook: Mitigation strategies for CIOs

Summary

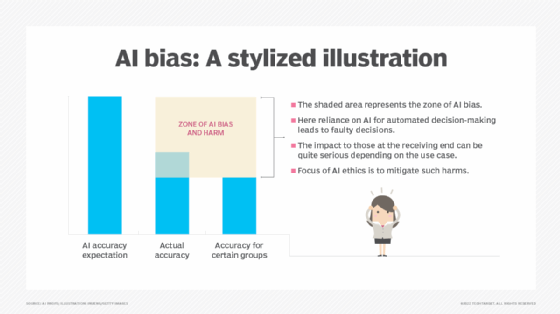

This article explains why AI bias is a growing boardroom concern and outlines practical mitigation strategies CIOs can use as part of enterprise AI governance. It distinguishes between algorithmic bias and data bias, and shows how biased training data can produce harmful outcomes, for example skin cancer detection models that are less accurate on darker skin tones, and recruiting tools that are biased against women. It stresses that bias often creeps in through incomplete, siloed or poorly curated data.

The piece argues that CIOs are well placed to lead anti-bias efforts because of their cross-organisational reach. It sets out three concrete priorities for CIOs: manage data rigorously, cultivate cross-team collaboration and embed governance from the start. Together, these reduce bias risk and improve model accuracy.

Key Points

- AI bias arises from algorithmic faults and from skewed training or input data; either can lead to inaccurate or discriminatory outcomes.

- High-profile examples (e.g., skin cancer detection that is less accurate on darker skin, and recruiting tools biased against women) show how unrepresentative data causes real harm and reputational risk.

- CIOs are uniquely positioned to organise cross-functional AI governance because they touch all parts of the organisation.

- Three practical mitigation strategies: prioritise data management (audits, lineage, representative datasets), cultivate cross-team collaboration (data science, legal, compliance, business leaders) and govern from the outset (risk assessment at design, not post-deployment).

- Treating bias as a core risk in enterprise risk and model risk management reduces costly model failures and protects brand trust.
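The data audits mentioned in the first strategy can start with two simple checks: is each group adequately represented in the training data, and does the model perform equally well for each group? The sketch below is illustrative only and does not come from the article. It assumes you already have a group label for every record; the helper names, the reference population shares and the `tolerance` threshold are all hypothetical choices that a real audit would set with legal, compliance and domain experts.

```python
from collections import Counter


def representation_gaps(groups, reference_shares, tolerance=0.8):
    """Flag groups whose share of the dataset is below tolerance * expected share.

    `reference_shares` (hypothetical) maps each group to its expected share
    of the population the model will serve, e.g. {"dark": 0.4}.
    Returns {group: observed_share} for every under-represented group.
    """
    counts = Counter(groups)
    total = sum(counts.values())
    flagged = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if observed < tolerance * expected:
            flagged[group] = round(observed, 3)
    return flagged


def accuracy_by_group(groups, y_true, y_pred):
    """Compute model accuracy separately for each group label."""
    totals = Counter()
    correct = Counter()
    for group, truth, pred in zip(groups, y_true, y_pred):
        totals[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / totals[group] for group in totals}


if __name__ == "__main__":
    # Toy data echoing the skin cancer example: "dark" is under-represented.
    groups = ["light"] * 8 + ["dark"] * 2
    y_true = [1, 0, 1, 1, 0, 1, 0, 1, 1, 0]
    y_pred = [1, 0, 1, 1, 0, 1, 0, 1, 0, 1]
    print(representation_gaps(groups, {"light": 0.6, "dark": 0.4}))
    print(accuracy_by_group(groups, y_true, y_pred))
```

On this toy data the first check flags the "dark" group (20% of records against an assumed 40% of the population) and the second shows the model failing on exactly that group. Running checks like these at design time, rather than after deployment, is the kind of early governance the third strategy describes.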

Context and relevance

With enterprise AI adoption increasing and regulation tightening, 2026 is shaping up as a year of intensified AI governance. The article is relevant to CIOs, CISOs, data scientists and business leaders who must balance rapid AI deployment with accountable, ethical outcomes. It connects to ongoing trends: stronger regulatory expectations, the need for explainability and the commercial imperative to avoid model mistakes that damage customers and the bottom line.

Why should I read this?

Quick version: if you care about stopping AI from quietly making the wrong calls — and you don’t want bias to blow up into a compliance, ethical or financial headache — this is worth your ten minutes. It gives straight-up, practical moves (data audits, cross-team accountability, governance at design) that a CIO can action now to reduce risk and improve model reliability.

Author style

Punchy: this isn’t academic fluff. The piece is action-oriented and aimed at leaders who need to organise people, data and governance to curb bias before it compounds into bigger problems. If you’re responsible for AI outcomes, the nuance here matters — and the recommended steps are ones you can start applying straightaway.