A large-scale coherent 4D imaging sensor

Summary

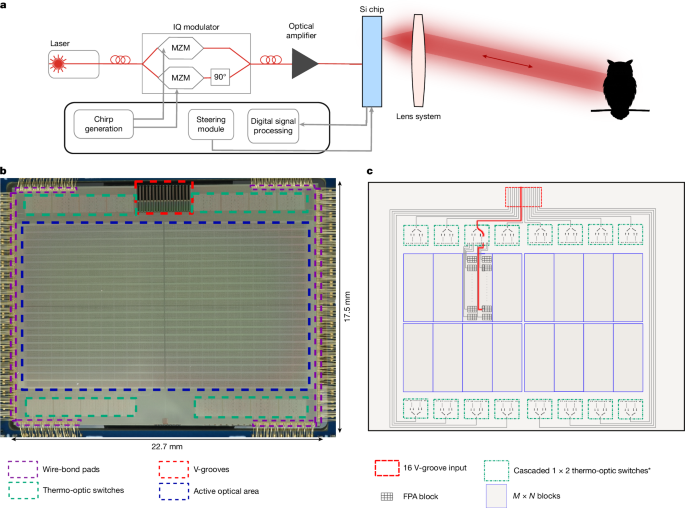

This Nature paper presents a monolithically integrated frequency-modulated continuous-wave (FMCW) LiDAR focal-plane array (FPA): a camera-like, coherent 4D imaging sensor built on a silicon-photonics/RF CMOS process. The chip contains a 352 × 176 pixel array with on-chip transmit/receive optics, balanced germanium photodiodes, pixel-integrated transimpedance amplifiers (TIAs) and a thermo-optic switching tree that steers light across pixel blocks. The system demonstrates simultaneous range and radial-velocity measurements (4D imaging), achieving 65 m radial range on 30% reflective targets with 46 nJ per point and an angular resolution of 0.06° using commercial SWIR lenses. The design is modular and scalable, with on-chip calibration and a path to greater range and manufacturability through Si–SiN hybrid approaches and LO/pixel optimisations.
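As a rough sanity check on these figures, the array size and angular resolution can be turned into a total point count, an approximate field of view and a cross-range spacing at the demonstrated range. The sketch below assumes a uniform angular pitch equal to the quoted 0.06° resolution, which the summary does not state; the real field of view depends on the lens fitted. All function names are illustrative.

```python
import math

COLS, ROWS = 352, 176      # pixel array size, from the summary
ANGULAR_RES_DEG = 0.06     # angular resolution, from the summary
RANGE_M = 65.0             # demonstrated range, from the summary


def field_of_view_deg(n_pixels, res_deg=ANGULAR_RES_DEG):
    """Approximate FOV along one axis.

    Assumption: every pixel subtends the quoted angular resolution.
    """
    return n_pixels * res_deg


def cross_range_spacing_m(range_m, res_deg=ANGULAR_RES_DEG):
    """Lateral distance between adjacent points at a given range."""
    return range_m * math.tan(math.radians(res_deg))


total_pixels = COLS * ROWS
h_fov = field_of_view_deg(COLS)
v_fov = field_of_view_deg(ROWS)
spacing = cross_range_spacing_m(RANGE_M)

print(f"points per frame: {total_pixels}")
print(f"approx. FOV: {h_fov:.2f} x {v_fov:.2f} degrees")
print(f"point spacing at {RANGE_M:.0f} m: {spacing * 100:.1f} cm")
```

Under these assumptions the array yields 61,952 points per frame, and neighbouring points at 65 m sit a few centimetres apart.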

Key Points

- Monolithic FMCW coherent LiDAR FPA: 352 × 176 pixels with integrated photonics and CMOS electronics at the pixel level.

- Monostatic coherent pixel architecture: heterodyne detection with balanced photodiodes and on-chip TIAs to reject common-mode noise.

- Solid-state on-chip beam steering: a multi-stage thermo-optic switch tree provides sequential illumination of pixel rows and built-in calibration via monitor photodiodes.

- Performance: 65 m radial range on targets of ~30% reflectivity at 46 nJ per point, with mean on-target power ≈178 μW per pixel; angular resolution 0.06°; potential 15 fps with full parallel readout.

- 4D capability: FMCW up/down chirps provide simultaneous distance and radial velocity (velocity resolution demonstrated down to 0.037 m s−1 in related schemes).

- Scalability and manufacturability: first large-scale coherent FPA with on-chip electronics, offering a cost structure closer to CMOS cameras for mass markets.

- Path to improvement: increasing LO power/TIA gain to reach the shot-noise limit (recovering ≈5.6 dB of SNR), and adopting Si–SiN architectures to overcome nonlinear silicon limits, with a potential range >200 m.
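The 4D capability point above rests on a standard FMCW relationship: with a triangular chirp, the average of the up-chirp and down-chirp beat frequencies encodes range, and half their difference encodes the Doppler shift. The sketch below is a textbook illustration, not the paper's processing pipeline. The chirp bandwidth and chirp duration are hypothetical values (the summary gives neither), positive velocity is taken to mean an approaching target, and the range tone is assumed to be larger than the Doppler shift so that neither beat frequency folds through zero.

```python
C = 299_792_458.0         # speed of light in vacuum, m/s
WAVELENGTH_M = 1310e-9    # laser wavelength, from the summary


def beat_frequencies(range_m, velocity_mps, bandwidth_hz, chirp_s,
                     wavelength_m=WAVELENGTH_M):
    """Forward model: beat tones seen on the up and down chirps.

    Sign convention (an assumption): velocity > 0 means approaching.
    """
    f_range = 2.0 * range_m * bandwidth_hz / (C * chirp_s)
    f_doppler = 2.0 * velocity_mps / wavelength_m
    return f_range - f_doppler, f_range + f_doppler


def range_and_velocity(f_up_hz, f_down_hz, bandwidth_hz, chirp_s,
                       wavelength_m=WAVELENGTH_M):
    """Invert the two beat tones into range and radial velocity."""
    f_range = 0.5 * (f_up_hz + f_down_hz)      # common part: range
    f_doppler = 0.5 * (f_down_hz - f_up_hz)    # differential part: Doppler
    range_m = C * chirp_s * f_range / (2.0 * bandwidth_hz)
    velocity_mps = wavelength_m * f_doppler / 2.0
    return range_m, velocity_mps


# Hypothetical chirp parameters, chosen only for illustration.
BANDWIDTH_HZ = 1e9
CHIRP_S = 10e-6

f_up, f_down = beat_frequencies(65.0, 1.0, BANDWIDTH_HZ, CHIRP_S)
est_range, est_velocity = range_and_velocity(f_up, f_down, BANDWIDTH_HZ, CHIRP_S)
print(f"f_up = {f_up / 1e6:.2f} MHz, f_down = {f_down / 1e6:.2f} MHz")
print(f"range = {est_range:.2f} m, velocity = {est_velocity:.3f} m/s")
```

Because the Doppler shift scales as 2v/λ, the short 1,310 nm wavelength gives a shift of roughly 1.5 MHz per m/s, which is why coherent LiDAR can resolve small radial velocities.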

Content summary

The authors developed a camera-like FMCW LiDAR sensor in which a diode laser (1,310 nm) is chirped via an on-chip IQ modulator. The modulated light is distributed through 16 fibre inputs into a hierarchical thermo-optic switch network that feeds blocks of pixels. Each 8-pixel row transmits and receives in parallel, using grating coupler pairs and a 50/50 coupler to mix return light with the local oscillator for heterodyne detection. On-chip concave microlenses increase coupling efficiency, and the pixel-integrated electronics (TIAs, pad drivers) multiplex signals off-chip for digitisation and processing on an RFSoC. The demonstrated system used off-the-shelf SWIR lenses to set the FOV and showed detailed point clouds at close and long ranges. Measured SNR is limited by amplifier noise (mean shot-to-amplifier ratio κ = 0.62, ≈ −5.6 dB penalty); straightforward LO/pixel design changes should reach shot-noise-limited operation. Loss budget, emission characterisation, Rayleigh range, imaging trade-offs and a full experimental methods pipeline are included, plus data and code access via a Dryad DOI.
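To see why mixing on a 50/50 coupler followed by balanced detection helps, it can be useful to work through an idealised model. The sketch below assumes a lossless coupler with a 90° phase shift on the cross path and unit-responsivity photodiodes; the power levels are hypothetical and the function names are illustrative, not taken from the paper. Subtracting the two photodiode signals cancels the large DC terms and leaves only the interference term, 2·sqrt(P_lo·P_sig)·sin(Δφ).

```python
import cmath
import math


def coupler_50_50(e_lo, e_sig):
    """Ideal lossless 50/50 coupler; the cross path adds a 90 degree phase."""
    s = 1.0 / math.sqrt(2.0)
    return s * (e_lo + 1j * e_sig), s * (1j * e_lo + e_sig)


def balanced_output(p_lo, p_sig, dphi):
    """Return (difference, sum) of the two photodiode powers.

    p_lo, p_sig: optical powers of local oscillator and return light.
    dphi: phase of the return light relative to the LO, in radians.
    Photodiode responsivity is set to 1 for simplicity.
    """
    e_lo = math.sqrt(p_lo)                           # LO is the phase reference
    e_sig = math.sqrt(p_sig) * cmath.exp(1j * dphi)
    out1, out2 = coupler_50_50(e_lo, e_sig)
    i1, i2 = abs(out1) ** 2, abs(out2) ** 2
    return i2 - i1, i1 + i2


# Hypothetical powers: 1 mW local oscillator, 1 nW return signal.
P_LO, P_SIG = 1e-3, 1e-9

diff, total = balanced_output(P_LO, P_SIG, math.pi / 2)
print(f"balanced signal: {diff:.3e}  (DC level on the diodes: {total:.3e})")
```

In an FMCW system Δφ advances at the beat frequency, so the balanced output is a tone at that frequency whose amplitude is boosted by the strong LO, while intensity fluctuations common to both diodes largely cancel.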

Context and relevance

This work sits at the intersection of silicon photonics, integrated electronics and LiDAR systems. It addresses a major industry trend: moving LiDAR from bulky scanned or high-power systems toward compact, low-power, mass-producible solid-state devices that behave like a camera for 4D sensing. The monolithic integration of optics and CMOS electronics reduces size, improves stability and promises lower cost and higher reliability for markets such as autonomous vehicles, robotics, mapping, AR/VR and consumer devices. The demonstrated pixel count and on-chip electronics make this the largest coherent FPA reported to date, and the modular approach gives a clear roadmap for pushing range, SNR and frame rate further through packaging, lens optimisation and Si–SiN hybridisation.

Why should I read this?

Short version: if you care about LiDAR actually becoming cheap, compact and camera-like, this is the paper. Big step — they’ve crammed a hundreds-of-thousands-component coherent LiDAR onto a single chip, shown real 4D point clouds and sketched practical upgrades to get much more range. Saves you digging through a dozen niche photonics papers — this one ties integration, performance and manufacturability together.

Source

Article date: 11 March 2026

Source: https://www.nature.com/articles/s41586-026-10183-6

Author style note

Punchy: this is presented as a concrete step towards a CMOS-camera equivalent for multidimensional imaging — important for anyone tracking how LiDAR will scale beyond specialised markets.