Anthropic struggling with Chinese competition, its own safety obsession

Summary

Anthropic is facing financial pressure and intensifying competition from Chinese AI labs as it eyes a potential IPO in Q4 2026. Although the company has raised about $30bn, filings show roughly $5bn in revenue against about $10bn spent on inference and training. Chinese models now dominate public usage rankings and undercut Anthropic on price, contributing to a drop in its measured market share from ~29.1% to ~13.3% over the year to March 2026 in one dataset. At the same time, Anthropic’s heavy safety guardrails, especially the cyber safeguards in Claude Opus 4.6, are irritating security researchers and developers by blocking legitimate bug-hunting and dual-use defensive work. The company offers an exemption process, but it is reportedly slow and inconsistently applied. Together, these pressures risk eroding Anthropic’s uptake among developers and some enterprise users as cheaper, more permissive alternatives gain traction.

Key Points

- Anthropic is preparing for a possible IPO in Q4 2026 while under financial strain.

- The company has raised about $30bn, but filings show roughly $5bn in revenue against about $10bn spent on inference and training.

- Chinese models now occupy top slots on public usage rankings and often deliver similar performance at far lower cost.

- Measured market share for Anthropic dropped from ~29.1% (Mar 2025) to ~13.3% (Mar 2026) in one dataset.

- Independent comparisons report that models like MiniMax deliver ~90% of Claude’s quality for around 7% of the cost.

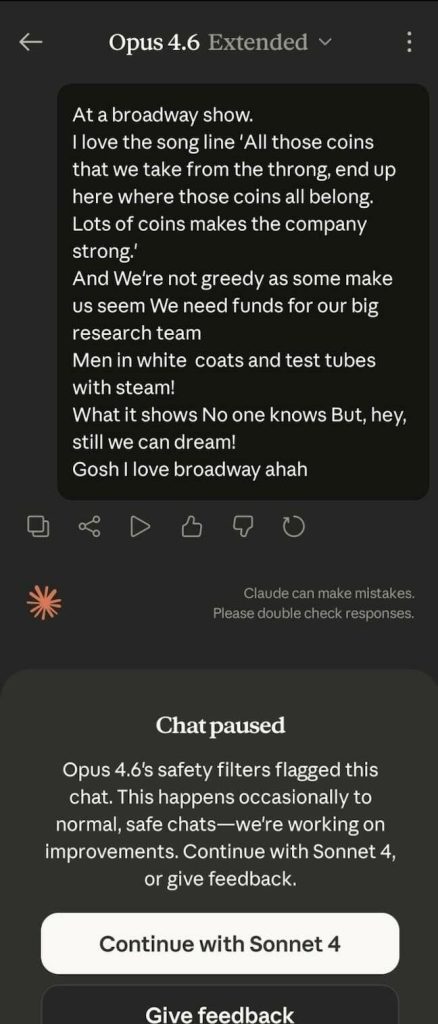

- Claude Opus 4.6 introduced cyber safeguards that can block legitimate security research and dual-use defensive tasks.

- Anthropic offers a petition/exemption route for security professionals, but clearance is not guaranteed and can be slow.

- The safety-first approach risks alienating developers and security teams, pushing some users toward cheaper, more permissive alternatives.
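
For readers who want to sanity-check the figures in the key points, here is a rough back-of-the-envelope sketch in Python. It only restates the reported numbers. The function and variable names are illustrative, and treating "quality" and "cost" as simple ratios is an assumption, not a description of how the original comparisons were computed.

```python
def relative_decline(before: float, after: float) -> float:
    """Fractional drop from `before` to `after` (0.5 means the value halved)."""
    return (before - after) / before


def quality_per_cost(quality_ratio: float, cost_ratio: float) -> float:
    """Quality delivered per unit of spend, relative to the baseline model."""
    return quality_ratio / cost_ratio


# Reported market share in one dataset: ~29.1% (Mar 2025) to ~13.3% (Mar 2026).
share_drop_points = 29.1 - 13.3
share_drop_relative = relative_decline(29.1, 13.3)

# Reported comparison: ~90% of Claude's quality for ~7% of the cost.
# Assumption: both figures are treated as plain ratios against Claude = 1.0.
value_multiple = quality_per_cost(0.90, 0.07)

# Reported filings: ~$10bn spent on inference and training vs ~$5bn revenue.
spend_to_revenue = 10 / 5

print(f"Market share drop: {share_drop_points:.1f} points ({share_drop_relative:.1%} relative)")
print(f"Quality per unit cost vs Claude: {value_multiple:.1f}x")
print(f"Spend-to-revenue ratio: {spend_to_revenue:.1f}x")
```

On these reported figures, the share loss is about 15.8 percentage points (a bit over half of the starting share), the cheaper model delivers roughly 13 times the quality per unit of spend, and compute spending runs at about twice revenue.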

Why should I read this?

Short version: if you care about where the AI market (and the tools you actually use) is heading, this matters. Anthropic’s safety-first stance is noble, but the company is losing ground to cheaper Chinese models while its guardrails annoy security pros, so who you trust for AI tooling could change fast. Read it if you’re an investor, dev, security bod or just nosy about who will win the next round.

Source

https://go.theregister.com/feed/www.theregister.com/2026/03/28/miss_anthropic_not_those_who/