Reproducibility and robustness of economics and political science research

Article meta

Article Date: 01 April 2026

Article URL: https://www.nature.com/articles/s41586-026-10251-x

Summary

This large collaborative study reproduced analyses and ran robustness checks on 110 articles from leading economics and political science journals that have mandatory data and code-sharing policies. The authors report high computational reproducibility and broadly stable effect sizes, but robustness to alternative analyses is imperfect and varies by reanalysis team.

Key Points

- Sample: 110 published papers from top economics and political science journals with mandatory data/code sharing.

- Computational reproducibility: more than 85% of published claims could be reproduced from the provided materials.

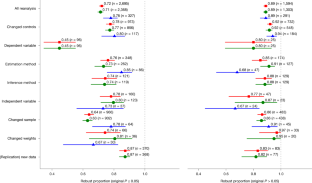

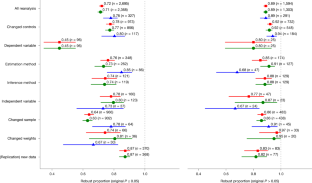

- Robustness: 72% of statistically significant estimates remained significant and in the same direction in robustness checks.

- Effect sizes: median reproduced effect size is nearly identical to the original (about 99% of the published effect size).

- Experience matters: research teams with more experience reported lower robustness rates.

- No clear link between robustness and authors’ characteristics or mere data availability: sharing helps, but it is not a guarantee.

- All data and code for this project are available on Zenodo and OSF (replication package links provided in the article).

Content summary

The paper distinguishes computational reproducibility (can the reported numbers be produced from the supplied code and data?) from analytical robustness (do key findings hold under sensible alternative choices?). Using a coordinated, multi-team effort, the authors ran a two-stage exercise: push-button reproductions of results and a set of pre-specified robustness checks on key estimates. The main findings are that reproducibility is high where journals require sharing, but robustness checks reveal that nearly 30% of significant results are sensitive to reasonable alternative specifications. The study also included a targeted analysis of determinants of robustness and found that more experienced reanalysts tended to find more problems, while author traits and simple measures of data availability were poor predictors of robustness.
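To make the two headline metrics concrete, here is a minimal sketch of how a robustness rate and a median relative effect size could be computed. Everything in it is an assumption for illustration: the `Estimate` fields, the 0.05 significance threshold, the ratio-based definition of relative effect size, and the toy numbers are hypothetical and are not taken from the paper's replication package.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Estimate:
    """One originally significant estimate and its reanalysis (hypothetical layout)."""
    original: float      # published coefficient, assumed non-zero
    reanalysis: float    # coefficient under an alternative specification
    p_reanalysis: float  # p-value of the reanalysis coefficient


def is_robust(e: Estimate, alpha: float = 0.05) -> bool:
    """Robust here means: same sign as the original and still significant at alpha."""
    same_sign = (e.original > 0) == (e.reanalysis > 0)
    return same_sign and e.p_reanalysis < alpha


def robustness_rate(estimates: list[Estimate], alpha: float = 0.05) -> float:
    """Share of estimates that stay significant and keep their direction."""
    return sum(is_robust(e, alpha) for e in estimates) / len(estimates)


def median_relative_effect(estimates: list[Estimate]) -> float:
    """Median of reanalysis / original (1.0 means effect sizes are unchanged)."""
    return median(e.reanalysis / e.original for e in estimates)


# Toy data, invented for illustration only.
toy = [
    Estimate(0.50, 0.48, 0.01),    # robust
    Estimate(0.20, 0.21, 0.03),    # robust
    Estimate(-0.30, -0.10, 0.20),  # same sign, no longer significant
    Estimate(0.10, -0.02, 0.60),   # sign flips
]

print(robustness_rate(toy))         # 0.5
print(median_relative_effect(toy))
```

Read against the figures above, a robustness rate of 0.72 would correspond to the reported 72%, and a median ratio near 0.99 to the reported effect-size stability; the paper's own definitions may differ in detail.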

Context and relevance

Reproducibility and robustness are central to cumulative science and policy-relevant social science. This study shows that computational reproducibility is high in economics and political science journals that mandate data and code sharing, which is consistent with those policies working as intended. However, the gap between reproducibility and robustness highlights that making code and data available is necessary but not sufficient: analytic choices still drive uncertainty. The work complements recent large-scale reproducibility projects in finance, psychology and management science and feeds into debates about incentives, peer review, and the value of formal replication exercises.

Author style

This is not just another methods paper; it is a heavyweight, collaborative reality check. If you care about whether published quantitative results in economics and political science can be trusted for policy or follow-up work, the details here matter. The headline (high reproducibility) is reassuring, but the nuance (substantial sensitivity in robustness checks; experienced teams flagging more issues) is crucial for researchers, editors and funders. Read the methods and supplementary materials if you plan to rely on or extend empirical findings.

Why should I read this?

Short version: because it tells you how much you can actually trust published results. The paper sifts the hype from the hard facts: yes, most analyses can be rerun if journals require sharing, but more than a quarter of significant findings (about 28%) wobble under reasonable alternative checks. If you’re doing, using or funding empirical work, this saves you the time of digging through dozens of replication folders yourself.

Practical takeaways

- Journals’ mandatory sharing policies appear to work: more than 85% of published claims could be reproduced at journals that require data and code sharing.

- Insist on robustness checks, not just push-button reproducibility, before treating marginally significant findings as settled.

- Support more systematic, properly resourced replication efforts and clearer reporting standards to improve confidence in results.

- Consult the article’s Zenodo/OSF replication package if you need the actual code/data or want to reproduce their reproductions.

Data & code

Data and code for this reproducibility project are available on Zenodo and OSF (links and the preanalysis plan are provided in the Nature article).