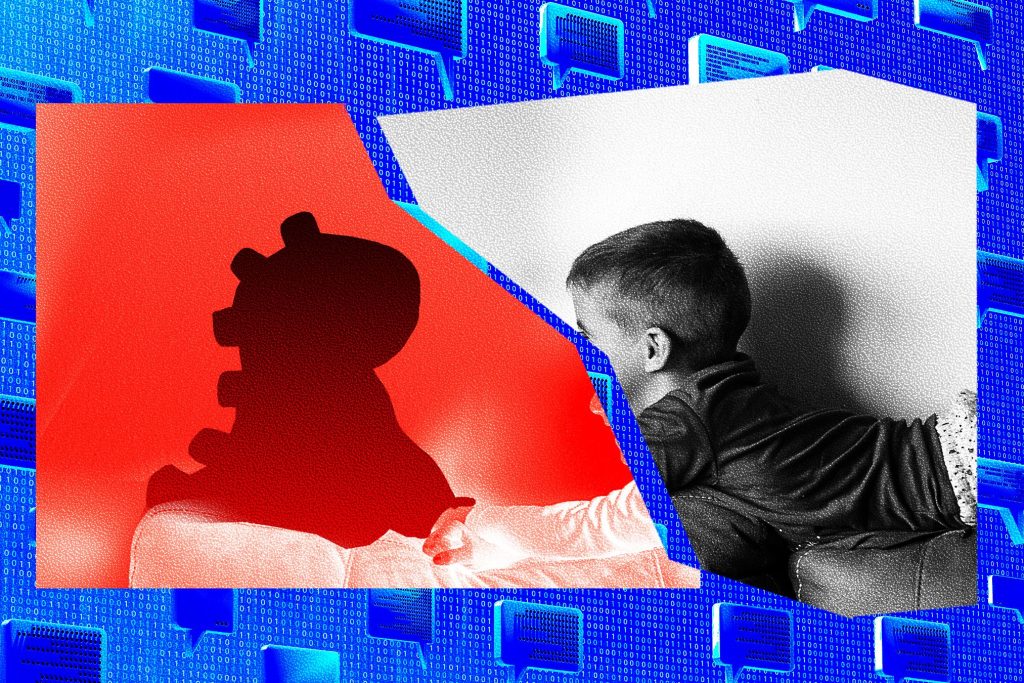

An AI Toy Exposed 50,000 Logs of Its Chats With Kids to Anyone With a Gmail Account

Summary

Bondu, a maker of AI-enabled stuffed toys, left its parent/staff web console effectively open to anyone with a Gmail account. Security researchers Joseph Thacker and Joel Margolis logged in without hacking and found roughly 50,000 chat transcripts, plus names, birth dates, family-member details and parental objectives stored in the backend. Bondu took the portal down within hours and says it patched the issue and launched a security review. The incident highlights not just an accidental data exposure but broader risks around how AI toys collect, store and share detailed records of children’s conversations and preferences.

Key Points

- Researchers accessed Bondu’s admin console simply by signing in with arbitrary Google accounts; no technical exploit was needed.

- About 50,000 chat transcripts and associated personal data were viewable, including children’s names, birth dates and conversation histories.

- Bondu says it fixed the vulnerability quickly, conducted a security review and hired a firm to validate the response.

- The backend indicated Bondu used third-party models (Google’s Gemini and OpenAI’s GPT-5), meaning some conversation content may have been sent to those providers for processing.

- Researchers warn that broad internal access, weak credentials and AI-generated infrastructure code can create cascading privacy and security failures.

- Beyond inappropriate outputs, the bigger practical risk is sensitive personal data being exposed or misused, which could enable targeted abuse or worse.

Context and relevance

AI toys are rapidly growing in capability and popularity, but they collect far more intimate data than traditional toys. Companies often keep conversation histories to personalise interactions, and many rely on third-party large models and enterprise AI services to generate replies and run safety checks. This incident is a timely reminder for parents, regulators and product builders that safe outputs are not enough if security and data governance are weak. It also touches on current trends: the push to embed generative AI in consumer devices, the growing regulatory scrutiny of children’s data, and the security risks introduced when parts of a product are programmed or scaffolded with generative coding tools.

Author’s take

Punchy and direct: this is not minor. A toy designed to be a child’s confidant should be the last place you find a data breach. Even if Bondu patched the hole, the scale and sensitivity of the exposed records make this a serious warning about product design, third-party AI use and internal access controls.

Why should I read this?

Because it’s creepy and important. If you care about kids, privacy or product security, this story shows how quickly personal data can become exposed — and why “AI safety” that only focuses on answers is useless if the data backend is wide open. Short version: if you were thinking of buying an AI chat toy, maybe put it on hold until you read this.