Investigating the replicability of the social and behavioural sciences

Summary

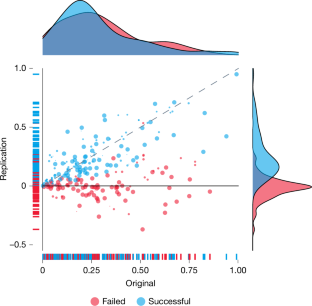

This large-scale SCORE replication project attempted to replicate 274 positive claims drawn from 164 papers (2009–2018) across 54 journals in the social and behavioural sciences. Replication attempts were generally highly powered (median power 99.6%), used original materials where possible, and followed a standardised, peer-reviewed protocol. Results: 151 of 274 claims (55.1%) showed a statistically significant pattern consistent with the original claim; weighted by paper, 80.8 of 164 papers (49.3%) replicated. Effect sizes fell: median Pearson’s r dropped from 0.25 (original) to 0.10 (replication), an ~82% reduction in shared variance. Different replication success metrics yielded widely varying estimates (28.6%–74.8%, median ~49.3%), and discipline-level rates varied modestly (approx. 42.5–63.1%). Data and code are publicly available via OSF.
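The headline percentages can be checked with a few lines of arithmetic. The sketch below uses only the figures quoted in this summary. Squaring the rounded medians gives a drop in shared variance (r squared) of about 84%, close to the ~82% quoted; the small gap may come from rounding of the medians, which is a guess rather than something the summary states. The helper `paper_weighted_count` and the toy data are hypothetical. They show one plausible way a fractional count such as 80.8 papers could arise (each paper contributing the fraction of its claims that replicated), not necessarily the authors' exact procedure.

```python
# Figures quoted in the summary above.
claims_replicated, claims_total = 151, 274
papers_replicated, papers_total = 80.8, 164   # fractional because it is paper-weighted
r_original, r_replication = 0.25, 0.10        # rounded median Pearson's r

claim_rate = claims_replicated / claims_total
paper_rate = papers_replicated / papers_total

# Shared variance is r squared, so the relative drop is 1 - r_rep^2 / r_orig^2.
shared_var_drop = 1 - (r_replication ** 2) / (r_original ** 2)


def paper_weighted_count(outcomes_by_paper):
    """Hypothetical helper: each paper contributes the fraction of its
    claims that replicated, so the total can be a non-integer."""
    return sum(sum(o) / len(o) for o in outcomes_by_paper.values())


# Made-up toy data, for illustration only.
toy = {
    "paper_a": [True, False],         # contributes 0.5
    "paper_b": [True],                # contributes 1.0
    "paper_c": [False, False, True],  # contributes 1/3
}
toy_count = paper_weighted_count(toy)

print(f"claim-level rate:     {claim_rate:.1%}")
print(f"paper-level rate:     {paper_rate:.1%}")
print(f"shared-variance drop: {shared_var_drop:.0%} (from rounded medians)")
print(f"toy paper-weighted count: {toy_count:.2f} of {len(toy)} papers")
```

The toy example shows why the paper-level rate can differ from the claim-level rate: papers with several claims count once, however many of their claims were tested.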

Key Points

- Scope: 274 claims from 164 papers across 54 journals were targeted for replication.

- Overall replication: 55.1% of claims and 49.3% of papers showed statistically significant replication under the primary assessment.

- Effect-size decline: median Pearson’s r fell from 0.25 (original) to 0.10 (replication), a substantial reduction in shared variance.

- Power and methods: replications were high-powered (median power ~99.6%) and followed peer-reviewed protocols; nevertheless, outcomes varied by metric and discipline.

- Replication metrics disagree: 13 different binary assessments produced replication-rate estimates from about 28.6% to 74.8% (median ~49.3%).

- Transparency: materials, data and code are hosted in a living OSF repository (DOI: https://doi.org/10.17605/OSF.IO/G5SNY) and an archived snapshot (https://doi.org/10.17605/OSF.IO/BZFGY).

- Conclusion: replicability challenges are widespread across social and behavioural fields; understanding conditions that help or hinder replicability is essential.

Content summary

The study coordinated many independent replication teams to re-run or reanalyse original claims using standard protocols. Replication success was assessed with multiple methods and thresholds, revealing that whether a claim “replicates” depends substantially on the metric used. Some decline in significance and effect size was expected (regression to the mean, selection of positive results), but the observed reductions were notable. The paper provides extensive supplementary figures and tables showing variation by discipline, year and journal, and offers open access to materials and code so others can inspect or extend the work.
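To see how the choice of metric can flip the verdict, here is a minimal sketch that applies two common binary criteria to one made-up replication. The criteria are illustrative and are not claimed to be among the 13 assessments used in the paper. The original effect (r = 0.25) matches the median quoted above; the replication estimate (r = 0.10) and sample size (n = 1000) are hypothetical. The confidence interval uses the standard Fisher z-transformation for a correlation.

```python
import math


def fisher_ci(r, n, z_crit=1.96):
    """Approximate 95% CI for a Pearson correlation via the Fisher z-transformation."""
    z = math.atanh(r)
    se = 1.0 / math.sqrt(n - 3)
    return math.tanh(z - z_crit * se), math.tanh(z + z_crit * se)


r_orig = 0.25               # original estimate (median value quoted in the summary)
r_rep, n_rep = 0.10, 1000   # hypothetical replication estimate and sample size

lo, hi = fisher_ci(r_rep, n_rep)

# Criterion A: the replication is significant in the original direction
# (its CI excludes zero on the same side as the original effect).
significant_same_direction = (lo > 0 and r_orig > 0) or (hi < 0 and r_orig < 0)

# Criterion B: the original estimate lies inside the replication's CI.
original_inside_ci = lo <= r_orig <= hi

print(f"replication 95% CI: ({lo:.3f}, {hi:.3f})")
print("A, significant in same direction:", significant_same_direction)
print("B, original inside replication CI:", original_inside_ci)
```

With these inputs the replication counts as a success under criterion A but as a failure under criterion B. That is the kind of disagreement behind the wide range of replication-rate estimates.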

Practical outputs include: a large curated dataset of replication attempts, a repository with push‑button code to reproduce analyses and figures, and diagnostics showing where replications failed or succeeded relative to effect-size differences. The authors discuss methodological reasons for variation, the role of publication and selection biases, and recommend continued efforts to identify conditions that improve robustness.

Context and relevance

This is a major, field-spanning meta-research effort from the SCORE programme and the Center for Open Science, building on prior “many labs” and replication projects. It provides the most comprehensive cross-disciplinary snapshot to date of replicability in social and behavioural research and highlights that (a) single summaries of replication rates can be misleading because they depend on metric choice, and (b) even well-powered, preregistered replications often find smaller effect sizes than originally reported. For researchers, funders and journals, the paper supplies empirical evidence to guide improvements in study design, transparency and incentives.

Author style

Punchy: this paper matters. It’s a big, meticulously executed project with openly shared data and code — the kind of evidence you should use when arguing for better research practices, larger samples or preregistration in social and behavioural science. Read the methods and supplementary OSF resources if you work in these fields: they contain actionable material and reproducible workflows.

Why should I read this?

Short version: if you care whether social‑science findings hold up, this is the showpiece study. It’s packed with real numbers, open data and clear signals that replication outcomes vary a lot depending on how you measure them — so it saves you the legwork of wading through dozens of smaller replication reports yourself.